There is a particular moment that arrives in almost every executive conversation about artificial intelligence. The initial enthusiasm fades, the vendor demonstrations have concluded, and the leadership team sits in silence around a boardroom table, staring at a budget line and realizing they are not actually sure what they are buying or why.

This guide is written for that exact moment.

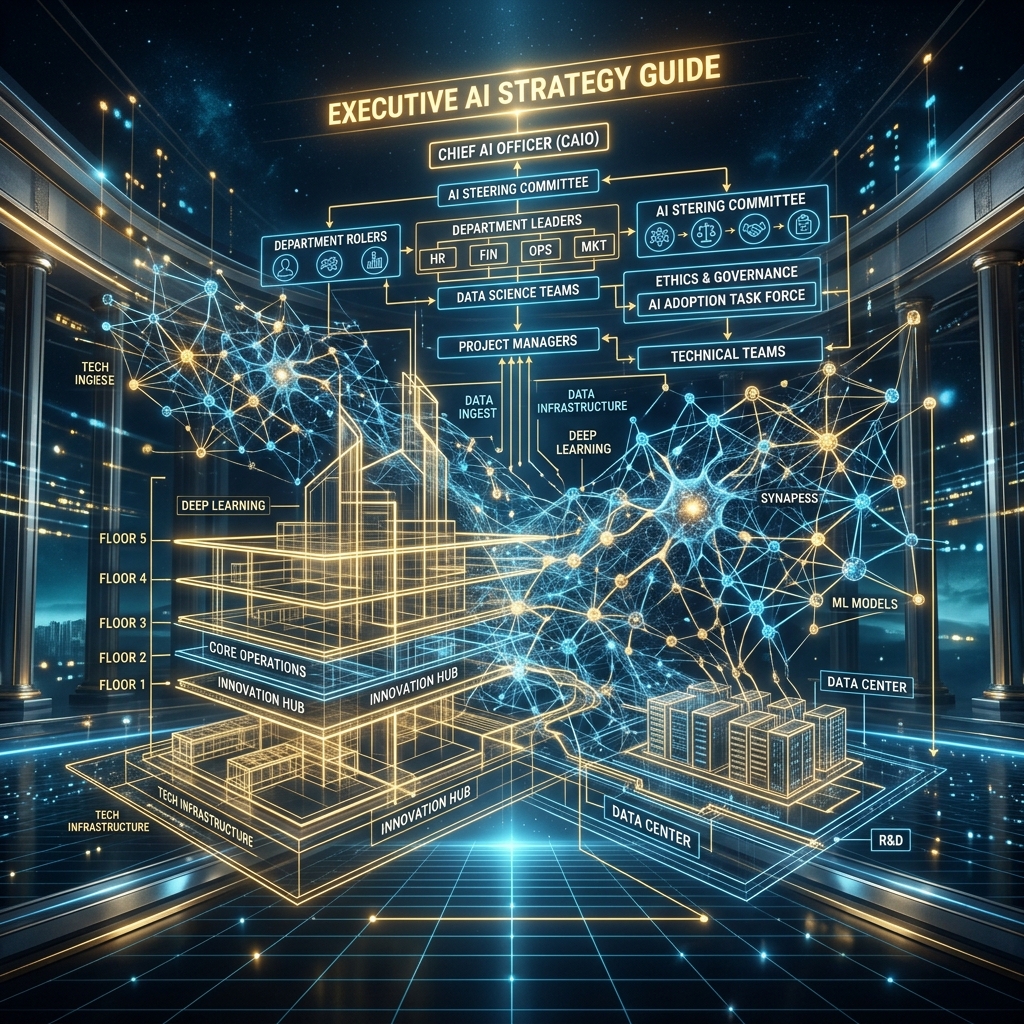

The complete AI strategy for an organization is not a single document. It is a structured architecture built across five interconnected disciplines: readiness assessment, operating model design, governance construction, phased adoption sequencing, and decision intelligence. Most organizations approach one or two of these disciplines in isolation and wonder why their results are disappointing. True transformation requires all five to operate simultaneously and coherently.

What follows is a definitive executive reference. It synthesizes the frameworks, models, and strategic principles that inform lasting AI transformation. It is intended to serve as a thinking tool for leaders who take the subject seriously.

Part One: Understanding What AI Strategy Actually Means

Before any organization can build a credible strategy, it must agree on what the term means. In most boardrooms, it does not mean much at all. It refers vaguely to "using AI more," which is equivalent to declaring a nutrition strategy of "eating better."

A genuine AI strategy is an organizational commitment to a specific economic outcome, enabled by the disciplined integration of intelligent systems into the operational model. Every word in that definition carries weight.

It is a commitment, not an experiment. Experiments are reversible. Strategy is a deliberate resource allocation that forecloses other options. If your leadership team is still describing its AI initiative as a pilot program eighteen months in, it does not have a strategy. It has an extended proof of concept with a marketing budget.

It targets a specific economic outcome. Revenue growth, margin protection, competitive differentiation, or decision speed: the strategy must be traceable back to a financial reality. If you cannot articulate the number that changes when the strategy succeeds, the strategy is decorative.

It is about the operational model, not the technology. The algorithm is a lever. The operating model is the machine it moves. Organizations that focus exclusively on selecting the right algorithm while leaving their workflows, incentive structures, and data architecture unchanged are pulling levers attached to nothing.

Part Two: The AI Readiness Assessment

No transformation program should begin without a structured, honest readiness assessment. This is not a technical audit. It is an organizational stress test across four dimensions.

For a deeper exploration of the four readiness dimensions, read AI Readiness Assessment.

Data Maturity: Is your organizational data structured, centralized, and accurate? The question is not whether you collect data, but whether it is usable. Most organizations collect enormous volumes of information housed in incompatible formats and controlled by different departments with conflicting naming conventions. A readiness assessment forces the organization to ask a threatening question: if we connected a machine learning pipeline to our existing data right now, what would it learn? The honest answer is usually chaotic and humbling.

Process Visibility: Are your operational workflows fully digitized and auditable? Every analog handoff, every undocumented exception, every informal approval route that exists outside the system represents a blind spot the algorithm cannot navigate. Readiness requires mapping not just the official process flow but the invisible workarounds that employees have engineered over years of practical survival.

Cultural Readiness: Are your managers capable of making decisions using probabilistic outputs rather than historical intuition? This is frequently underestimated. A leadership team that expects certainty from a statistical model, and rejects the tool the first time it generates a recommendation they disagree with, will never successfully deploy AI at scale. Cultural readiness means the organization has trained its people to tolerate and reason with uncertainty.

Governance Readiness: Does a framework exist that can govern the ethical, legal, and operational risks associated with automated decisions? If the answer is no, the organization is not ready to move beyond the sandbox. Deploying production-grade intelligence systems without governance infrastructure is not bold. It is reckless.

Part Three: Designing the AI Operating Model

The operating model represents the organizational architecture that makes intelligent deployment possible. It answers the question: how does the company actually function when AI is embedded into its core workflows?

For a detailed breakdown of the operating model redesign, read The AI Operating Model.

The answer requires restructuring four fundamental organizational elements.

From Departmental Silos to Cross-Functional Pods. The standard corporate structure optimizes for departmental accountability. Finance owns the numbers. Marketing owns the brand. Operations owns the logistics. This clean separation is fatal to AI deployment, which thrives on integrated, continuous data flows across all three simultaneously.

High-performing AI operating models replace departmental ownership with cross-functional deployment pods. A pod for revenue intelligence includes a data scientist, a senior sales director, a risk officer, and a legal counsel. They share accountability for both the technical reliability and the commercial outcome of the model they govern.

Elevating AI Governance to Board Level. Governance cannot be delegated downward. When an automated pricing model causes a significant commercial error, the question of who owns the liability will travel upward at speed. If the governance structure lives inside the IT department, it will be overwhelmed by the velocity of that accountability conversation.

The governance function must sit at board level with direct CEO access. It must possess formal veto authority over any proposed AI deployment that crosses defined ethical or financial risk thresholds. It must include members who understand both the technical architecture and the corporate legal landscape.

Restructuring the Decision Hierarchy. In a traditional operating model, decisions travel upward to the executive layer. In an AI-augmented model, decisions travel outward to the frontline operators who are closest to the data. The executive layer shifts from making operational micro-decisions to designing the guardrails within which autonomous systems and frontline operators make those decisions independently.

This inversion is deeply uncomfortable for traditional leadership. It requires executives to relinquish operational control in exchange for strategic leverage. The organizations that accomplish this transition cleanly gain enormous competitive speed.

Rewiring Human Incentives. Operating models ultimately run on human behavior, which is shaped by incentive structures. If your compensation framework rewards individual output and penalizes collaboration, the cross-functional pod model will fail regardless of the technology deployed. Every performance metric, bonus structure, and promotion criterion must be audited and revised to align with the behaviors the new operating model demands.

Part Four: Building the Governance Framework

Intelligent systems carry liability in proportion to their influence. A chatbot that answers frequently asked questions carries minimal risk. An algorithm that approves or denies commercial loan applications carries enormous risk, and must be governed accordingly.

For a deeper exploration of the full hostile review matrix and liability architecture, read AI Governance for Organisations.

A complete governance framework operates across three layers.

Pre-Deployment Risk Classification. Before any model enters production, it must be formally assigned a risk tier. Low-risk models operate with minimal oversight. High-risk models require documented explainability standards, audit trails for every significant automated decision, and mandatory human review at defined checkpoints. The classification must be conducted by the governance board, not the engineering team that built the system.
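The tiering logic described above can be made concrete with a small sketch. The two classification criteria, the field names, and the function names below are illustrative assumptions rather than criteria prescribed by this guide; a real governance board would define its own. The controls listed for each tier are the ones named in the text.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass(frozen=True)
class ModelProfile:
    # Hypothetical fields chosen for illustration only.
    name: str
    makes_automated_decisions: bool
    affects_customers_financially: bool


def classify(profile: ModelProfile) -> RiskTier:
    """Assign a risk tier using two assumed yes/no criteria."""
    if profile.makes_automated_decisions and profile.affects_customers_financially:
        return RiskTier.HIGH
    return RiskTier.LOW


def required_controls(tier: RiskTier) -> list[str]:
    """Return the oversight controls the text associates with each tier."""
    if tier is RiskTier.HIGH:
        return [
            "documented explainability standards",
            "audit trail for significant automated decisions",
            "mandatory human review at defined checkpoints",
        ]
    return ["minimal oversight"]


# The two examples used earlier in this part: an FAQ chatbot and a loan model.
faq_bot = ModelProfile("faq_chatbot", False, False)
loan_model = ModelProfile("loan_approval", True, True)
```

Calling `required_controls(classify(loan_model))` returns the three high-risk controls, while the FAQ chatbot falls into the low tier. The point of the sketch is that the tier, not the engineering team's preference, determines which controls apply.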

Continuous Operational Monitoring. Deployed models are not static. They drift. The behavioral patterns they learn from last year's data may generate increasingly harmful recommendations as the operating environment changes. The governance framework must enforce scheduled penetration testing and statistical drift audits. Any model showing measurable deviation from baseline performance standards must be flagged, reviewed, and if necessary, suspended.
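One way a statistical drift audit might be implemented is with the Population Stability Index (PSI), which compares how a model's scores were distributed at baseline with how they are distributed now. This is a minimal sketch under stated assumptions: the guide does not prescribe PSI, the function names are hypothetical, and the 0.25 review threshold is a commonly quoted rule of thumb rather than a standard.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of a numeric score, using equal-width bins
    whose edges are taken from the baseline sample."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range are clipped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = shares(baseline), shares(current)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def needs_review(baseline, current, threshold=0.25):
    """Flag the model for governance review when PSI exceeds the assumed threshold."""
    return population_stability_index(baseline, current) > threshold
```

Run on a schedule against each production model, a check like this turns "measurable deviation from baseline" into a number the governance board can set a threshold on. What happens after a flag, whether review or suspension, remains a human decision.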

Clear Human Override Protocols. Every deployed system must have a documented shutdown procedure and a clear chain of human authority empowered to execute it. The governance board must be able to freeze a production system within minutes of identifying a serious anomaly. If the shutdown procedure requires a multi-week approval process, the framework is decorative rather than functional.

Part Five: The Phased Adoption Sequence

Strategy without sequencing is wishful thinking. The adoption sequence determines the order in which capabilities are deployed, ensuring that each phase builds the organizational muscle required for the next.

For a deeper exploration of the complete five-phase adoption sequence, read AI Adoption Roadmap.

Phase One: Foundation and Literacy. Clean the data architecture. Map the invisible workflows. Train the management layer to reason with probabilistic information. This phase produces no visible algorithmic output. It is pure infrastructure investment, and it is the phase most organizations skip, which is invariably why their subsequent phases fail.

Phase Two: Internal Augmentation. Deploy intelligence tools to internal, low-stakes workflows first. Knowledge retrieval systems, internal document summarization, administrative scheduling automation. This phase builds organizational familiarity with non-deterministic software, trains the IT and governance teams in real deployment conditions, and generates the early wins needed to sustain political support for the program.

Phase Three: Customer-Adjacent Deployment. With internal confidence established, introduce intelligence augmentation to customer-facing workflows without removing human oversight. The system drafts the communication; the human reviews and approves it. The system generates the pricing recommendation; the account manager confirms it. Decision quality and speed both improve. The human remains in the loop and in control.

Phase Four: Bounded Autonomy. Within tightly defined parameters, authorize the system to execute decisions without explicit human approval. The parameters must be narrow, financially constrained, and continuously monitored. This phase is where the efficiency gains become substantial and measurable.
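The idea of narrow, financially constrained parameters can be sketched as a simple routing check that runs before any decision is executed. The three bounds, their names, and the example values below are assumptions made for illustration; the actual parameters would be set by the governance board.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AutonomyBounds:
    # Hypothetical limits; real values are a governance decision.
    max_amount: float      # largest single decision the system may execute alone
    daily_budget: float    # cumulative value it may commit per day
    min_confidence: float  # below this model confidence, a human decides


def route_decision(amount, confidence, spent_today, bounds):
    """Return "auto" only when the decision sits inside every bound;
    anything else is escalated to a human."""
    within_bounds = (
        amount <= bounds.max_amount
        and spent_today + amount <= bounds.daily_budget
        and confidence >= bounds.min_confidence
    )
    return "auto" if within_bounds else "human_review"


# Example limits for a hypothetical refund-approval workflow.
refund_bounds = AutonomyBounds(max_amount=200.0, daily_budget=5000.0, min_confidence=0.9)
```

The design choice worth noting is the default: the system must prove a decision is inside the bounds to act alone, and every failure of that proof falls back to the human-in-the-loop arrangement from Phase Three.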

Phase Five: Strategic Decision Intelligence. At full maturity, the system transcends operational efficiency and participates in executive strategy. It models competitive scenarios, stress-tests capital allocation decisions, and identifies market opportunities that human analysis alone cannot detect at that scale. The executive layer is augmented, not replaced. Human leadership focuses on direction and culture while algorithmic systems continuously optimize the operational pathway.

Part Six: Decision Intelligence as the Strategic Horizon

The organizations that have successfully navigated the preceding elements of this framework arrive at a common realization. The most valuable thing an intelligent system can do is not write faster or process more documents. It is to systematically improve the quality of the highest-stakes decisions made by the people who run the company.

For a deeper exploration of the strategic horizon, read Decision Intelligence.

Decision intelligence is the discipline of building organizational systems that combine human judgment with continuous computational probability analysis. It is not about automating executive decisions. It is about giving executives a mathematically rigorous adversary that can challenge confirmation bias, model downside scenarios, and surface the invisible variables that human cognition consistently underweights.

The shift requires a genuine cultural commitment. Executive teams must be willing to have their intuitive convictions challenged by a statistical model in a formal setting. They must develop the discipline to document their reasoning when they override the machine's recommendation. And they must build the intellectual humility to update their priors when the data repeatedly contradicts their assumptions.

The Framework in Practice

The five disciplines described in this guide are not sequential stages to be completed and discarded. They are continuously operating organizational capabilities that require ongoing investment and leadership attention.

Data maturity degrades if poorly managed. Governance frameworks become stale if not regularly stress-tested. Operating models calcify if incentive structures are not revisited as the capability evolves. The organizations that sustain competitive advantage do not reach a finish line. They build the institutional discipline to continuously evolve.

For leaders who take this seriously, the path forward is clear. Assess your current readiness honestly, build your governance infrastructure before you need it urgently, redesign your operating model to reward the behaviors that intelligent deployment demands, sequence your adoption with patience and discipline, and keep your strategic vision focused on improving the quality of the decisions that ultimately determine where your organization stands in five years.

The technology will continue to improve, with or without your participation. The question is what kind of organization you intend to be when the acceleration reaches its full velocity.