Most companies approach artificial intelligence exactly backwards. They read headline after headline about the transformative power of generative models. They feel the defensive urgency to adopt or be left behind. So they do what corporate structures are designed to do: they issue a request for proposals, they buy software, and they force a deployment. Six months later, they review user metrics and wonder why nothing has fundamentally changed about how their business functions.

The problem is structural. They are treating AI as a discrete application you can install, rather than a foundational capability you have to build.

I regularly talk to executives who enthusiastically approve massive technology budgets based on polished vendor demonstrations. Those demos always look flawless because they run on perfectly structured, pre-cleaned sandbox data. The vendor's model answers every query with pinpoint accuracy. The charts generate instantly.

But real corporate reality looks nothing like a vendor sandbox. Real organizational data is messy, fragmented across disconnected legacy systems, heavily siloed between departments, and full of historical human errors. When you drop an advanced statistical reasoning engine on top of a broken, chaotic data architecture, you do not get better or faster decisions. You just automate your own corporate confusion at unprecedented speed.

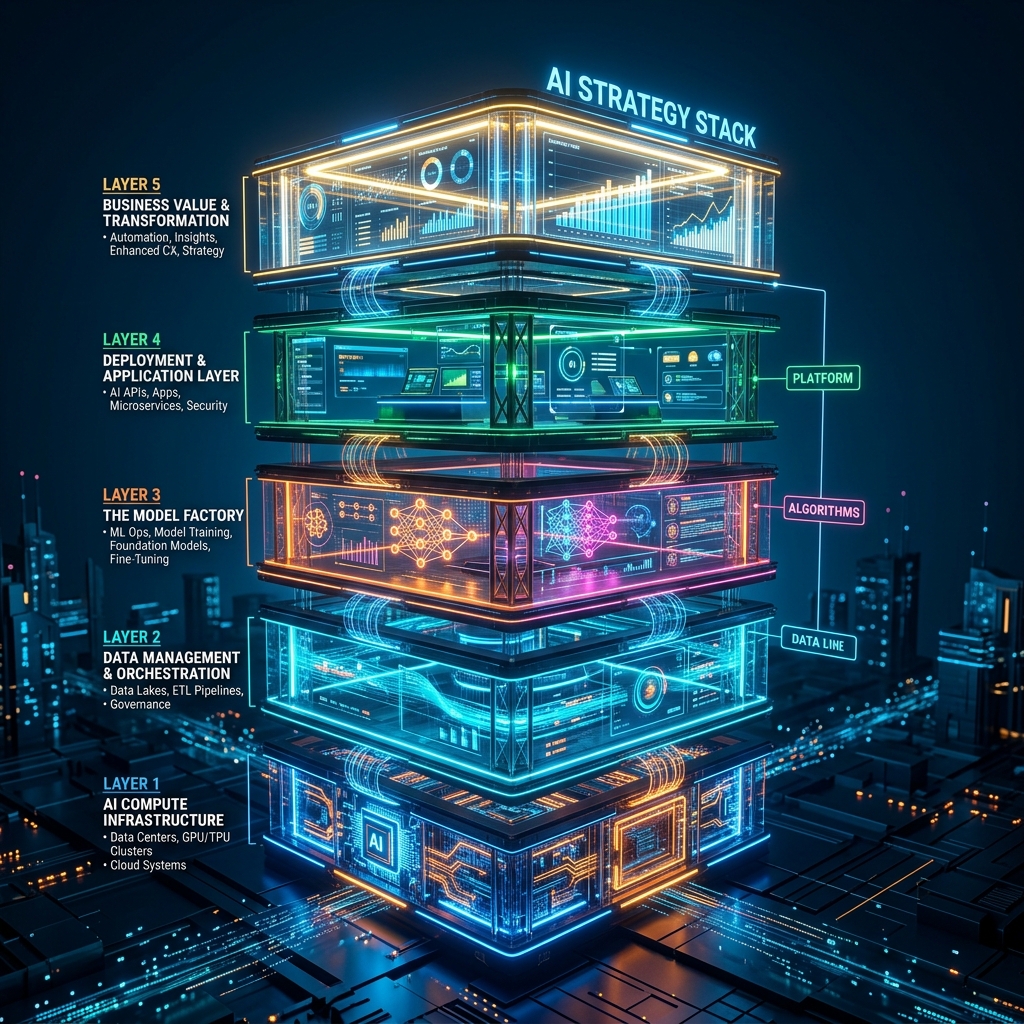

To actually capture value, organizations need to stop buying tools and start building a stack. The AI Strategy Stack is a sequential, proprietary framework consisting of five non-negotiable layers: infrastructure, data discipline, AI capability, decision intelligence, and the operating model.

When you understand the stack, you understand two things instantly. First, you cannot skip a layer without compromising the entire structure. Second, you cannot build them out of sequence.

Layer 1: Infrastructure and Compute Architecture

The foundation of any serious technological transformation rests on where the processing happens and how it scales. Many organizations default to external public cloud providers without fully calculating the long-term operational and strategic costs.

For a deeper exploration of readiness assessment, read AI Readiness Assessment.

Cloud infrastructure is highly accessible and eliminates early capital expenditure, but it comes with an immediate dependency tradeoff. You surrender structural control over data residency, latency constraints, and cost predictability in exchange for operational convenience. For large enterprises, financial institutions, or government bodies handling sensitive citizen data, treating standard cloud deployment as the default or only option is strategically flawed.

Data sovereignty matters. Regulations change. If your operating jurisdiction updates its compliance, privacy, or security policies overnight, your baseline architecture must be clean and localized enough to adapt without forcing you to rebuild your capability from scratch.

The foundational question executives need to answer at this layer is not just "where do we host this software?" They need to ask who ultimately owns the compute path, what the latency tax will be on high-volume queries, and how reliant they are willing to be on a single foreign provider's pricing decisions. If you do not own your infrastructure strategy, you do not own your AI strategy.

Layer 2: Data Discipline and Information Hygiene

This is the most boring layer of the stack. It is also the exact place where seventy percent of corporate AI initiatives fail quietly.

If your core operational and customer data is scattered arbitrarily across legacy SQL databases, unmanaged spreadsheets stored on employee laptops, and outdated local hard drives, no neural network is going to save you. Advanced models require clean, well-structured, continuously updated pipelines to function reliably.

I have watched well-funded companies hire expensive machine learning engineers and data scientists long before they audited their own record-keeping practices. Because the data wasn't ready, those data scientists spent their first year acting as highly paid digital janitors, scrubbing duplicate entries from customer relationship management systems and trying to reconcile conflicting taxonomy tags. This is an enormous waste of specialized human capital.

Leaders must aggressively enforce data discipline before they write a single line of AI code. You need strict naming conventions that everyone follows. You need automated validation checks at the point of data entry. You need clear, unambiguous ownership assigned to specific individuals. Someone specific must be accountable for ensuring the product inventory database is logically consistent.
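As a rough illustration of what a validation check at the point of data entry can look like, here is a minimal Python sketch. The SKU format, the field names, and the `validate_record` helper are all hypothetical, chosen only to show the three rules above (naming convention, sanity check, named owner) being enforced before a record is saved.

```python
import re
from dataclasses import dataclass

# Hypothetical naming convention: three capital letters, a dash, five digits.
SKU_PATTERN = re.compile(r"[A-Z]{3}-\d{5}")


@dataclass
class InventoryRecord:
    sku: str
    quantity: int
    owner: str  # the specific person accountable for this record


def validate_record(record: InventoryRecord) -> list[str]:
    """Return a list of problems; an empty list means the record may be saved."""
    problems = []
    if not SKU_PATTERN.fullmatch(record.sku):
        problems.append(f"sku {record.sku!r} breaks the naming convention")
    if record.quantity < 0:
        problems.append("quantity cannot be negative")
    if not record.owner.strip():
        problems.append("record has no accountable owner")
    return problems


good = InventoryRecord(sku="WID-00042", quantity=10, owner="j.smith")
bad = InventoryRecord(sku="widget 42", quantity=-3, owner="")

print(validate_record(good))
print(validate_record(bad))
```

The point of the design is that a bad record is rejected with a readable reason at entry time, rather than discovered months later by a data scientist.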

A lack of discipline here causes compounding errors later. If your purchasing data is consistently off by five percent, a predictive forecasting model won't correct that error. It will bake that five percent error into every future prediction it makes, scaling the mistake until you have a massive inventory crisis. Until you master simple database hygiene, worrying about generative reasoning is irrelevant.
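To make the arithmetic concrete, here is a small Python sketch. It assumes a deliberately naive forecast (next month's order equals the recorded monthly figure) and invented numbers: a true demand of 10,000 units per month that is recorded five percent too high. Real forecasting models are more sophisticated, but a consistent bias in the inputs passes straight through unless something corrects it.

```python
def cumulative_overstock(true_monthly_demand: int, bias_percent: int, months: int) -> list[int]:
    """Surplus units accumulated month by month when orders follow biased records."""
    # What the database claims was purchased (true figure plus the bias).
    recorded = true_monthly_demand + true_monthly_demand * bias_percent // 100
    # Naive forecast (an assumption of this sketch): order what the records say.
    forecast = recorded
    surplus_per_month = forecast - true_monthly_demand
    return [surplus_per_month * month for month in range(1, months + 1)]


surplus = cumulative_overstock(true_monthly_demand=10_000, bias_percent=5, months=12)
print(surplus[0])   # surplus after one month
print(surplus[-1])  # surplus after twelve months
```

With these illustrative numbers the surplus is 500 units after one month and 6,000 after a year, more than half a month of real demand sitting unsold.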

Layer 3: The AI Capability Engine

Only after your infrastructure is mapped and your data is ruthlessly cleaned do you reach the layer that most people associate with "doing AI." The capability engine is where organizations determine exactly how they will generate automated insights, content, and predictions.

You have three broad implementation choices here. You can consume standard, generalized models via commercial APIs. You can fine-tune existing open-weights models on your proprietary, newly cleaned corporate data. Or, if you have immense financial and computational resources, you can train a foundation model from scratch.

For nearly all organizations, including large multinationals, training from scratch is financial suicide. The first two options are the only strategic moves worth considering.

For text and reasoning tasks, retrieval-augmented generation (RAG) is quickly becoming the baseline architectural standard. Instead of expensively forcing a language model to memorize your company's specific compliance policies through fine-tuning, you build an efficient search index of your verified documents. When an employee asks a question, the system retrieves the relevant, verified document and feeds it to the reasoning model right before generating the answer. The model's output is still probabilistic, but this grounding step drastically reduces hallucination risks and keeps the answers anchored in your actual, controlled data.
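The retrieve-then-generate flow described above can be sketched in a few lines of Python. This is a toy under stated assumptions: the three policy documents are invented, retrieval is plain word overlap rather than a production search index, and the final call to a language model is left out. The function names `retrieve` and `build_prompt` are hypothetical.

```python
import re

# Hypothetical "verified documents"; a real system would index thousands.
DOCUMENTS = {
    "travel-policy": "Employees may book business class for flights longer than eight hours.",
    "expense-policy": "Meal expenses are reimbursed up to fifty dollars per day with receipts.",
    "security-policy": "Laptops must use full disk encryption and a password manager.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, documents: dict[str, str]) -> str:
    """Return the id of the document sharing the most words with the question."""
    question_words = tokenize(question)
    return max(documents, key=lambda doc_id: len(question_words & tokenize(documents[doc_id])))


def build_prompt(question: str, documents: dict[str, str]) -> str:
    """Place the retrieved source in front of the question for the model to use."""
    doc_id = retrieve(question, documents)
    return (
        "Answer using only the source below.\n"
        f"Source [{doc_id}]: {documents[doc_id]}\n"
        f"Question: {question}"
    )


question = "How much are meal expenses reimbursed per day?"
print(build_prompt(question, DOCUMENTS))
```

The prompt that reaches the model carries the verified text and its identifier, which is what lets a reviewer trace an answer back to a controlled source.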

But the capability engine is significantly more complex than just plugging into a language model. This layer includes all the necessary routing logic, the automated testing frameworks, and the rigid guardrails that prevent a customer service agent from accidentally offering unauthorized discounts. The technical leads must build this layer with extreme defensive pessimism. You must assume the model will eventually, and confidently, produce critical errors. You build the capability engine to catch and flag those errors before they ever reach a screen.
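A guardrail of this kind can be as simple as a check that runs on every drafted reply before it is shown to a customer. The sketch below is illustrative only: the ten percent limit, the `check_reply` function, and the pattern-matching approach are assumptions, and the check is intentionally conservative (any percentage above the limit is flagged, whether or not it is a discount).

```python
import re
from dataclasses import dataclass

MAX_DISCOUNT_PERCENT = 10  # hypothetical policy limit
PERCENT_PATTERN = re.compile(r"(\d+)\s*(?:%|percent)", re.IGNORECASE)


@dataclass
class GuardrailResult:
    approved: bool
    reason: str


def check_reply(draft: str, max_percent: int = MAX_DISCOUNT_PERCENT) -> GuardrailResult:
    """Block any drafted reply that mentions a percentage above the limit."""
    percentages = [int(value) for value in PERCENT_PATTERN.findall(draft)]
    too_high = [p for p in percentages if p > max_percent]
    if too_high:
        return GuardrailResult(
            approved=False,
            reason=f"mentions {max(too_high)}%, above the {max_percent}% limit; route to a human",
        )
    return GuardrailResult(approved=True, reason="no percentage above the authorized limit")


print(check_reply("I can apply a 5% loyalty discount to your order."))
print(check_reply("As an apology, we can offer you 40 percent off today!"))
```

Note that the check never tries to judge whether the model "meant" to offer the discount. It assumes the model can be wrong and inspects the output itself.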

Layer 4: Decision Intelligence

Basic automation focuses on replacing hands. Decision intelligence focuses on assisting heads.

Far too many business conversations about artificial intelligence fixate exclusively on simple efficiency metrics. Companies want software to write cold sales emails faster, or to summarize hour-long meetings into bullet points automatically. Those are completely valid quality-of-life improvements, but they are commodities. If your competitor can buy the same email-writing software for thirty dollars a month, you have not created a competitive advantage. You have just lowered the baseline cost of mediocrity.

The true, lasting strategic value of artificial intelligence lies in dramatically improving the quality and speed of executive and managerial decisions. A proper decision intelligence layer connects the predictive power of your capability engine to the daily, high-stakes choices your leaders make.

If you manage an international logistics network, your AI system should not simply send an alert reporting that a cargo shipment is delayed. Basic reporting software does that. A decision intelligence system models the financial impact of three different rerouting options, factoring in current fuel costs, weather patterns, and penalty clauses. It then presents those options to a human manager along with explicit confidence scores.
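A minimal sketch of that comparison follows. Everything in it is an assumption made for illustration: the three options, the cost figures, the probabilities, and the simple expected-cost formula (fuel cost plus the chance of a late delivery times the contractual penalty). A real system would derive these inputs from live fuel prices, weather models, and contract data.

```python
from dataclasses import dataclass


@dataclass
class RerouteOption:
    name: str
    fuel_cost: float     # extra fuel or freight cost for this option
    late_penalty: float  # penalty clause triggered if the delivery is late
    prob_late: float     # estimated chance of a late delivery (e.g. from weather)
    confidence: float    # how much the model trusts its own estimate, 0 to 1

    def expected_cost(self) -> float:
        return self.fuel_cost + self.prob_late * self.late_penalty


def rank_options(options: list[RerouteOption]) -> list[RerouteOption]:
    """Order the options from lowest to highest expected cost."""
    return sorted(options, key=lambda option: option.expected_cost())


options = [
    RerouteOption("wait at port", fuel_cost=0, late_penalty=50_000, prob_late=0.9, confidence=0.8),
    RerouteOption("air freight", fuel_cost=30_000, late_penalty=50_000, prob_late=0.05, confidence=0.9),
    RerouteOption("alternate sea route", fuel_cost=12_000, late_penalty=50_000, prob_late=0.3, confidence=0.6),
]

for option in rank_options(options):
    print(f"{option.name}: expected cost {option.expected_cost():,.0f}, confidence {option.confidence:.0%}")
```

In this invented scenario the cheapest option on paper is also the one with the lowest confidence score, which is exactly the kind of tradeoff the human manager is there to weigh.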

This requires teaching an entire generation of managers how to interrogate probabilistic systems. A traditional software dashboard gives an executive a firm, absolute number. An AI forecast provides a likelihood and a margin of error. Leaders must learn to read confidence intervals. They must understand the limits of the training data. Most importantly, they must understand when to trust the machine's recommendation and when to override it using human context the machine lacks.

Moving from deterministic reporting to probabilistic forecasting represents a massive shift in corporate culture. At Layer 4, the system is no longer just a passive system of record. It becomes an active, heavily relied-upon advisor.

Layer 5: Operating Model Redesign

You can build the first four layers perfectly, invest millions into infrastructure, achieve pristine data hygiene, and deploy brilliant predictive models. But you will still fail to see a return on investment if your organization fundamentally refuses to change how it works.

For a deeper exploration of operating model redesign, read The AI Operating Model.

Adding an intelligent, high-speed system to an outdated, slow-moving organizational chart creates massive internal friction. When an AI tool starts drafting complex legal contracts or initial codebase architecture in a matter of seconds, the operational bottleneck instantly shifts from the drafting phase to the reviewing phase.

If your corporate manual still requires three different senior partners to manually read and approve every single contract sequentially, your overall cycle time barely improves. You have simply moved the traffic jam further down the road, while making your highly paid partners miserable by flooding their inboxes.

Organizations must actively redesign their human operating models to absorb and utilize the speed of these new tools. You need to transition away from rigid departments to cross-functional pods where legal, compliance, and engineering team members sit at the same table and evaluate risks in parallel. You need distinct, permanent governance boards that evaluate both the ethical implications and the financial liabilities of every newly automated process before it scales.
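The effect of the approval chain on cycle time is easy to show with back-of-the-envelope arithmetic. The figures below are invented for illustration (an eight-hour manual draft, a five-minute AI draft, and three reviews that each take sixteen working hours including queue time), and the model ignores rework and handoff delays.

```python
def cycle_time_sequential(draft_minutes: int, review_minutes: list[int]) -> int:
    """Each reviewer waits for the previous one, so review times add up."""
    return draft_minutes + sum(review_minutes)


def cycle_time_parallel(draft_minutes: int, review_minutes: list[int]) -> int:
    """Reviewers work at the same time, so only the slowest review counts."""
    return draft_minutes + max(review_minutes)


reviews = [960, 960, 960]  # three reviews of 16 hours each, in minutes

manual_sequential = cycle_time_sequential(480, reviews)  # human draft, old process
ai_sequential = cycle_time_sequential(5, reviews)        # AI draft, old process
ai_parallel = cycle_time_parallel(5, reviews)            # AI draft, redesigned process

print(manual_sequential, ai_sequential, ai_parallel)
```

Under these assumptions, speeding up the draft alone takes the cycle from 3,360 minutes to 2,885, a modest gain. Running the same reviews in parallel brings it down to 965.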

The most common structural mistake organizations make in this era is officially assigning the "AI Strategy" back to the Chief Information Officer and expecting it to transform the business. The IT department can secure the infrastructure. They can procure the software licenses. But IT simply does not have the political authority to explicitly redesign the enterprise sales process or change how human resources hires candidates.

The Chief Executive Officer must own the operating model redesign. If the top leader attempts to delegate the structural human changes to middle management, organizational inertia will guarantee that the status quo wins.

Why the Sequencing Is Non-Negotiable

The fatal flaw for most executive teams is trying to adopt the applications without doing the invisible, heavy lifting of building the underlying stack. They purchase a high-end application positioned at Layer 3, point it at a broken internal database located at Layer 2, and then wonder why the expensive new application keeps confidently quoting outdated inventory numbers to important clients.

For a deeper exploration of building your strategy step by step, read How to Build an AI Strategy.

You have to build the competence from the bottom up. You start by critically auditing your cloud reliance and raw infrastructure. Then you enforce strict policies to clean your data and keep it clean. Only after establishing that discipline should you select your reasoning models and design your decision frameworks. Finally, you adjust your human workflows, approval chains, and governance policies to take advantage of the newly unlocked speed.

Building the AI Strategy Stack is not a fast process, and it does not yield immediate press releases. It requires significant political capital from the executive board and sustained investment over multiple quarters. But organizations that put in the hard work of building this foundation will stop running continuous, speculative pilot programs that never scale. They will deploy resilient, scalable systems that actually alter the trajectory of their business.